What Is AI Not? — AI Is Not IT

Since the earliest days of computing, AI and IT have been closely related.

Think of Lady Lovelace, who worked with Charles Babbage on his design for the Analytical Engine. She was among the first to recognize that such a machine could be programmed to solve problems of any complexity, or even to compose music. This formed the inspiration for theories on logic that resulted in the programming languages in use today.

She is regarded as the first computer programmer, but she was most likely thinking about what we would today call AI when she said: "The Analytical Engine has no pretensions whatsoever to originate anything. It can do whatever we know how to order it to perform. It can follow an analysis, but it has no power of anticipating any analytical relations or truth."

Her statement was later debated by Alan Turing, who is himself an example of the intertwined relationship between AI and IT in one person. He defined a test of a machine's ability to exhibit intelligent behavior. Turing proposed an experimental setup in which an evaluator judges a conversation between a human and a machine without knowing which party is which. The evaluator is separated from both parties and knows only that one of them is a machine. The machine passes the Turing test if the evaluator cannot tell it from the human. This test, in many variations, still plays a role in defining AI.

At the same time, Turing played a crucial role by designing one of the first computers (the ACE) based on the von Neumann design: a stored-program computer. This marked the beginning of programmable machines and the start of executing the vision of Lady Lovelace. It is difficult to think of a better example of the intertwined relationship between AI and IT, because the programmable computer is the beginning of IT as we know it today. (I will use the terms IT and computer science interchangeably.)

Given this short history of AI and computer science, you may have been surprised by my statement in my previous article: "Computer science and computational theory are not on the list [of disciplines that perform AI research]. While certainly related research areas, they do not get to the fundamentals of problems that AI considers."

AI is a different discipline. Early ideas of intelligent machines did not use computers. Only by the late 1950s did AI researchers start to base their work on programming computers. Today, there is no AI researcher who is not working with a computer, but that is true for every researcher.

The advantage of using computers for AI research is that they are fast and can be programmed in different ways to test ideas. That makes them, for now, the best means to test AI ideas. But the science of making fast and reliable computers and computer programs is not fundamental to AI. It is a resource, and a very important one, but if there were a better resource, we would use it. The other way to think about it: making computers intelligent is not the objective of computational theory.

To conclude: AI and IT are closely related because both are about automating a task, using a computer, that would have otherwise been performed by a human or would require infinite human resources.

Examples of such tasks are:

- Database technology and SQL have a basis in AI research; however, I don't consider a database, or any system that collects, stores, and retrieves information, to be AI or a decision support system.

- A workflow system (ERP) ensures that all enterprise assets are registered from beginning to end and supports collaboration between multiple divisions. It may include rules to calculate prices and define valid status updates; however, it is not AI.

- A dashboard provides an overview of the status of a road network, showing how travel speed develops over time. It helps you and me choose the best route, and it helps traffic managers communicate traffic information to travelers.

These tasks are relatively trivial and static once they are designed. Computer science is about automating these tasks in the most efficient, effective, and fastest way. Many computers, devices, and sensors must be connected, and the data they collect has to be transformed into relevant and useful information. All this is IT and not AI.

What about knowledge representation and business rules in IT? A very common IT architecture consists of 3 layers: one for collecting data, a second to aggregate the data into information using business rules, and a third to present, or act on, that information. The 3-tier architecture is not AI, just a good IT practice.
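As a rough illustration of the three layers described above, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Reading` type, the hard-coded sensor values, and the 50 km/h congestion rule are hypothetical and borrow only the road-network theme from the dashboard example. The point is that the business rule is fixed at design time and nothing in the pipeline changes it.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    """One raw measurement (hypothetical data model)."""
    segment: str
    speed_kmh: float


# Assumed business rule, fixed when the system is designed.
CONGESTION_THRESHOLD_KMH = 50.0


def collect() -> list[Reading]:
    """Layer 1: collect data (hard-coded here instead of real sensors)."""
    return [
        Reading("A1", 95.0),
        Reading("A1", 105.0),
        Reading("A2", 30.0),
        Reading("A2", 40.0),
    ]


def aggregate(readings: list[Reading]) -> dict[str, dict]:
    """Layer 2: aggregate data into information using the business rule."""
    by_segment: dict[str, list[float]] = {}
    for reading in readings:
        by_segment.setdefault(reading.segment, []).append(reading.speed_kmh)
    info = {}
    for segment, speeds in by_segment.items():
        average = mean(speeds)
        info[segment] = {
            "avg_speed_kmh": average,
            "congested": average < CONGESTION_THRESHOLD_KMH,
        }
    return info


def present(info: dict[str, dict]) -> list[str]:
    """Layer 3: present the information, one line per road segment."""
    lines = []
    for segment, details in sorted(info.items()):
        status = "congested" if details["congested"] else "free flow"
        lines.append(f"{segment}: {details['avg_speed_kmh']:.0f} km/h, {status}")
    return lines


if __name__ == "__main__":
    for line in present(aggregate(collect())):
        print(line)
```

Data flows one way through the layers, and the rule stays exactly as it was written, which is why this kind of pipeline is good IT practice rather than AI.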

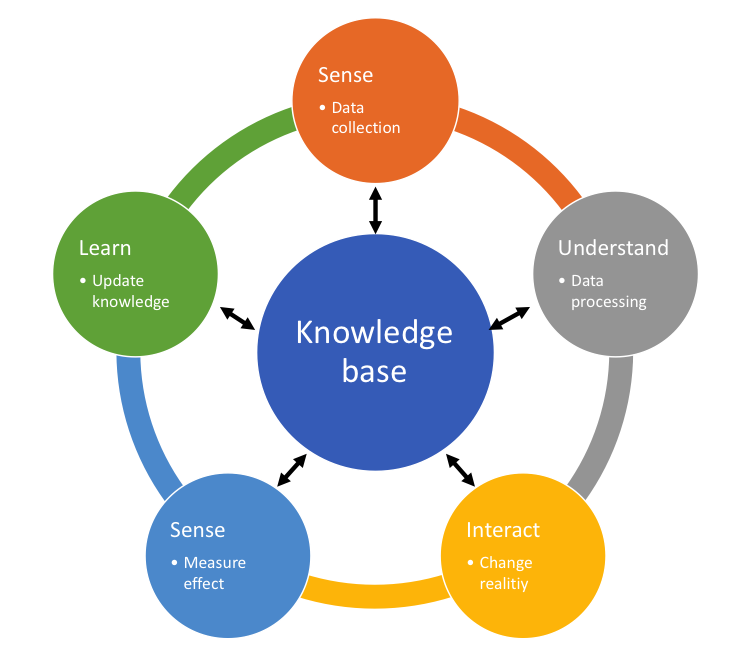

When knowledge is represented in such a way that it drives this process of data collection (sensing), data processing (understanding), and informed decision making (interacting) to change the world, learn, and collect data again, I am confident in saying that we are talking about AI. This is a feedback loop.
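To make the shape of such a loop concrete, here is a deliberately small Python sketch. It is a toy under stated assumptions, not a claim about how any real system works: the `RouteAdvisor` class, the `ACTUAL_MINUTES` world, the starting estimates, and the learning rate are all hypothetical. What it shows is only the cycle itself: the represented knowledge drives a decision, the decision has an observable effect, and that observation changes the knowledge before the next round.

```python
# Hypothetical world: real travel times in minutes, unknown to the advisor.
ACTUAL_MINUTES = {"highway": 30.0, "backroad": 20.0}


class RouteAdvisor:
    """Toy agent whose knowledge is a travel-time estimate per route."""

    def __init__(self, initial_estimates, learning_rate=0.5):
        self.estimates = dict(initial_estimates)  # the represented knowledge
        self.learning_rate = learning_rate        # assumed update strength

    def decide(self):
        """Understand and interact: choose the route believed to be fastest."""
        return min(self.estimates, key=self.estimates.get)

    def learn(self, route, observed_minutes):
        """Feed the observed outcome back into the knowledge."""
        old = self.estimates[route]
        self.estimates[route] = old + self.learning_rate * (observed_minutes - old)


def run(advisor, trips):
    """Run the sense, decide, act, learn cycle for a number of trips."""
    history = []
    for _ in range(trips):
        route = advisor.decide()          # act on current knowledge
        observed = ACTUAL_MINUTES[route]  # sense the effect in the world
        advisor.learn(route, observed)    # update knowledge, then loop again
        history.append(route)
    return history


if __name__ == "__main__":
    advisor = RouteAdvisor({"highway": 15.0, "backroad": 25.0})
    print(run(advisor, 4))
    print(advisor.estimates)
```

With these starting values the advisor first trusts its too-optimistic highway estimate, corrects it after two trips, and then switches to the back road. The contrast with a static pipeline is that the knowledge at the end of the run is no longer the knowledge that was designed in.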

The AI community also struggled with this concept and came up with two new concepts: narrow AI and strong AI. In narrow (or weak) AI, the focus is on automating just one task. So far, this is what we see all around us. Strong (or full) AI, by contrast, is about machines that are able to perform any intellectual task that humans can do. Such machines would have 'general intelligence' to solve any problem and would even be able to perform tasks that we do not yet know about. This idea is not new, but the distinction has become more widely used in the past 20 years.

The concept of general intelligence is very disturbing for many of us because the research is looking for 'consciousness' as a vital function of strong AI. Unpredictable and subjective behavior of machines is a good ingredient for a thriller and good food for thought, but a bad ingredient for our peace of mind. Luckily, there is very little progress in this research area.

XAI (explainable AI) suggests something in between. It is still narrow AI, but used in such a way that there is a feedback loop to the environment. The feedback loop may involve human intervention. We understand the scope of the narrow AI solution. We can adjust the solution when the task at hand requires more knowledge, and we are warned in a meaningful way when the task at hand does not fit within the scope of the AI solution.
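One way to picture a narrow solution that knows its own scope is sketched below in Python. This is an illustration under assumptions, not a description of any particular XAI product: the `Advice` type, the `advise` function, the set of known road segments, and the speed limits are all hypothetical. Every answer carries an explanation, and a request outside the declared scope is handed to a human with a meaningful warning instead of being answered anyway.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed scope of the narrow solution (hypothetical values).
KNOWN_SEGMENTS = {"A1", "A2"}
MIN_KMH, MAX_KMH = 0.0, 130.0
CONGESTION_THRESHOLD_KMH = 50.0


@dataclass
class Advice:
    decision: Optional[str]  # None when the solution declines to decide
    explanation: str         # why this decision, or why no decision
    needs_human: bool        # True when a person should take over


def advise(segment: str, speed_kmh: float) -> Advice:
    """Give routing advice, or warn when the input is out of scope."""
    if segment not in KNOWN_SEGMENTS:
        return Advice(
            None,
            f"Segment {segment!r} is outside the scope of this solution.",
            True,
        )
    if not MIN_KMH <= speed_kmh <= MAX_KMH:
        return Advice(
            None,
            f"Speed {speed_kmh} km/h is outside the expected range "
            f"{MIN_KMH:.0f}-{MAX_KMH:.0f} km/h.",
            True,
        )
    if speed_kmh < CONGESTION_THRESHOLD_KMH:
        return Advice(
            "reroute",
            f"{speed_kmh} km/h is below the {CONGESTION_THRESHOLD_KMH:.0f} km/h rule.",
            False,
        )
    return Advice(
        "stay",
        f"{speed_kmh} km/h meets the {CONGESTION_THRESHOLD_KMH:.0f} km/h rule.",
        False,
    )


if __name__ == "__main__":
    for request in [("A1", 35.0), ("A1", 90.0), ("B7", 90.0), ("A2", 250.0)]:
        print(request, advise(*request))
```

Adjusting the solution when the task requires more knowledge then becomes an explicit, visible step, for example adding a newly understood segment to `KNOWN_SEGMENTS`, rather than something the system does silently.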

About this series: I have been fighting for years for transparent decisions: decisions that you can explain, that relate to policy, and that are part of a PDCA process. Now that the AI hype is at its peak, I have the feeling that I can start all over again. Because these 'solutions' are potentially destructive, practically incomprehensible, and (for most) unintelligible, it is important to remove any mysticism surrounding them. This is the third article in a series of ten. After defining what AI is in my previous article, it's equally important to describe what it is not. However, my overall objective is to explain how to create synergy between AI and knowledge-based solutions.

I would really appreciate your feedback.
